[Preview of Semicon Korea 2019 AI Summit]

The evolution of artificial intelligence (AI) semiconductors is moving in two broad directions. One is to run AI functions on the device itself, and the other is to process large volumes of data in the cloud. Infrastructure, cloud, and device companies are all trying to take the lead by strengthening their competitiveness in edge devices. Neither approach can fully implement AI on its own, so the two have to work together.

For example, Google and IBM are developing the Tensor Processing Unit (TPU) and the TrueNorth chip, respectively, with plans to implement AI on cloud infrastructure. Companies such as Baidu and Facebook use general-purpose graphics processing units (GPUs), which offer higher parallel computing capability than central processing units (CPUs). Intel and Qualcomm, on the other hand, are developing AI semiconductors for the devices that users handle directly.

Connecting the two camps is fifth-generation (5G) mobile communication. Compared to fourth-generation (4G) Long Term Evolution (LTE), 5G offers not only higher data transfer rates but also lower latency. This faster response makes it suitable for new applications such as autonomous vehicles. However, problems arise if the network connection suddenly drops. That is why Intel argues that 5G is not essential for the safety of autonomous vehicles. Data can be exchanged over the network, for example to receive high-resolution maps or download content, but a self-driving car must still be able to make safety judgments and prevent accidents by itself.

It is also important that consumers be able to experience AI right away. This is why Samsung Electronics, Apple, Intel, Qualcomm, and HiSilicon are competing to introduce neural processing units (NPUs) in their application processors (APs). An NPU, which mimics the neural network of the brain, has a circuit structure optimized for the repetitive operations of machine learning. When an NPU is built into a smartphone AP, performance in learning and in video, image, and speech recognition improves. This allows differentiation, because the user experience (UX) can be raised to a new level.

To achieve this, the performance of each element of a semiconductor must be improved while power consumption is lowered. This means that companies must have system-on-chip (SoC) capabilities. Movidius, a company acquired by Intel, has released Myriad X, a vision processing unit (VPU) SoC with built-in deep neural network (DNN) acceleration technology. Recently, the Neural Compute Stick 2, which is equipped with this chip and plugs into a PC to run AI without the cloud, was also unveiled. Compared to the first product, the Neural Compute Stick, processing speed is improved by 8 times and power consumption is reduced to 1 watt (W).

The Samsung Galaxy S10, which will be unveiled in February, is equipped with the Exynos 9 (9820) and its enhanced NPU, with performance about 7 times better than that of the previous version (9810). When you take a picture, the AI instantly recognizes information such as the subject, the location, and the brightness of the surroundings, and automatically sets the optimum values. It can also support augmented reality (AR) and virtual reality (VR) services by quickly and accurately identifying the characteristics of a person or object.

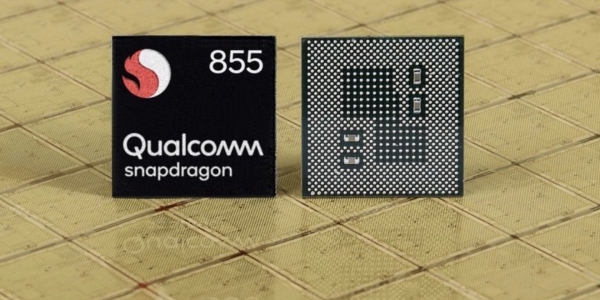

Qualcomm has integrated its 4th-generation AI engine, which combines a CPU, a GPU, a digital signal processor (DSP), and an image signal processor (ISP), into the Snapdragon 855. It can perform 7 trillion operations per second, three times as many as the Snapdragon 845.

The semiconductor industry is expected to grow through the coexistence of high-capacity, high-speed AI chips in cloud infrastructure and edge AI computing chips that run AI operations directly on devices. In other words, cloud and edge-device AI semiconductors complement each other and will grow together.